
Currently, there is no foolproof way to prove whether a piece of content was written by AI or by a human. Despite what many detector tools claim, no AI detector is 100% accurate. In fact, growing evidence from researchers, educators, writers, and editors since 2023 shows that these tools are highly unreliable and produce inconsistent results.

The truth is that these AI detectors aren’t actually determining whether AI wrote the content. There is no way to do that accurately unless the writer left their prompts in the document. Instead, the tools analyze patterns and sentence predictability to estimate whether something looks AI-like.

Part of the problem is also the type of writing being tested. A Reddit or Facebook post, for example, is naturally messy, casual, and inconsistent. A student essay or professionally written marketing article, on the other hand, is usually more formal, structured, and impersonal by design. Ironically, that polished structure can make it more likely to be labelled as AI by these detectors, even when it was written entirely by a human.

Unfortunately, this means well-structured or professionally edited writing from real people often gets flagged. Detection tools base their results on patterns drawn from billions of pieces of content across the web, including material that existed long before AI, so polished human writing can easily match what they treat as “AI-like.”

What We Know About AI Detection Tools

In reality, LLMs (Large Language Models) simply learn patterns from existing content – much of it written by the same professional writers producing content today. We regularly test these detection tools ourselves, running content through platforms like Grammarly, Copyleaks, Winston AI, and ZeroGPT to see how they interpret real human writing. We often get inconsistent results, such as:

- Fully human-written, original content flagged as AI

- Content copied and pasted directly from ChatGPT marked as 100% human

- Mixed content (AI drafts with human editing) that completely confuses the detectors

Results like these show that no technology available today is precise enough to make definitive judgments.

This is also why a growing number of university professors, publishers, and companies refuse to rely on AI detection tools when evaluating submitted content. At best, these tools can serve as an initial reference point for editors. Even then, only people with strong writing experience and developed content literacy can properly interpret the results and investigate further before drawing any conclusions.

Studies That Show AI Detection Tools Are Highly Inaccurate

Academic studies analyzing six major AI detection tools found that the systems correctly identified AI-generated text only 39.5% of the time, highlighting the systems’ unreliability. The researchers also found that when AI-generated content was edited or paraphrased, detection accuracy dropped even further – in some cases as low as 17%.

Even the companies building AI technology acknowledge the limitations of detection tools. According to a recent study by OpenAI, attempts to train reliable AI detectors have produced significant false positives. In their testing, AI detectors incorrectly flagged well-known human writing – including works by Shakespeare and even the US Constitution, written in the 1700s – as AI-generated!

OpenAI also noted that these systems may unfairly affect certain students. Writing from people who learned English as a second language, or who use more structured, formulaic language, is more likely to trigger AI flags. Because of these reliability issues, many professors and universities are now moving away from AI detection tools, acknowledging that they cannot serve as definitive proof of AI use.

MIT Sloan’s Teaching & Learning Technologies group also cautions against relying on AI detection tools. They note that AI detection software is “far from foolproof” and can lead instructors to falsely accuse students of misconduct.

Based on Our Own Tests

In one of our many tests, we took a single piece of fully human-written blog content and ran it through multiple AI detection tools to see how consistent the results would be. The outcomes were polar opposites.

One detector, Copyleaks, reported the text as 98% AI, while Winston AI marked the exact same passage as 0% AI.

When the same text produces such drastically different results across tools, it raises an obvious question: which one is correct? This is only one of many examples we’ve seen since these tools appeared, but it reflects a pattern of variability that casts doubt on how dependable they really are.

In another test, the opposite scenario produced equally confusing results. We took a blog post generated entirely by ChatGPT, copied it verbatim, and ran it through several AI detection tools. Surprisingly, Grammarly marked the content as 100% human-written, while ZeroGPT detected only 14% of it as AI-generated.

When a piece of text produced entirely by an AI model can fool certain AI detectors into believing it is completely human, it further exposes the limitations of these systems. Just like human-written content can be falsely flagged as AI, AI-generated content can also slip through undetected.

Using THIS Blog as a Case Study

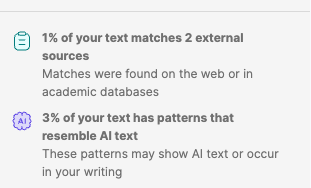

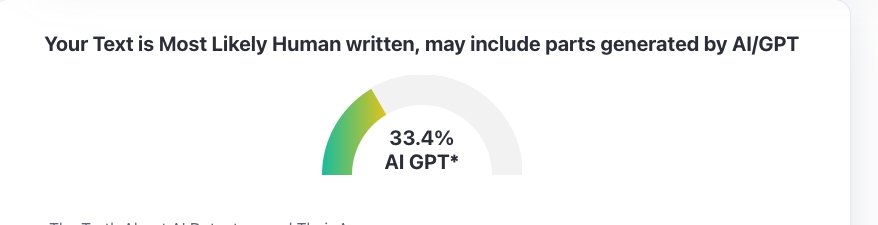

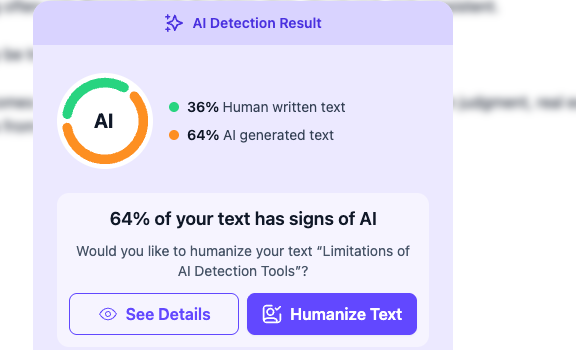

Now, let’s use this very blog, which was fully human-written, as our most recent example. We’re going to compare Grammarly’s paid AI detection feature with one of the most popular AI detection tools today, ZeroGPT. Both tools, by the way, claim to be 99% accurate in detecting AI content.

We ran this post through Grammarly using our paid Grammarly Premium membership, and it reported 3% AI pattern recognition, with only 1% of the text matching content found on the web.

When we pasted the same article into the free version of ZeroGPT, however, it flagged 33.4% as AI-generated.

Just to make sure, we also tested a newer AI detection tool called TextGuard AI (one of many platforms entering the market). It produced a very different result, claiming the same content was 64% AI-generated. Like many of these tools, it also offers a feature to “humanize” text if you pay for a subscription. But if I used that feature on my original content, wouldn’t that make it technically AI-generated text?

So if one tool can use AI to rewrite your content so that it appears human, how can other AI detectors claim to identify AI with near-perfect accuracy? Make it make sense!

And if all of these tools claim to be 99% accurate, yet we get wildly different results from scanning the same document, how can anyone prove which one is right or wrong?

AI Detectors Are Pattern Analyzers

AI detection tools are trained using large datasets of writing collected from the internet, academic papers, and AI-generated samples. By studying these datasets, the systems attempt to learn statistical patterns that might indicate whether text was written by a human or produced by an AI model.

In practice, this means AI detectors are not identifying a definitive “AI signature.” Instead, they are making predictions based on patterns found in publicly available writing. They analyze factors such as sentence structure, vocabulary predictability, and phrasing styles against what they’ve seen in their training data.

Why does this lead to inaccurate results?

The problem is that this training data includes all kinds of writing found across the internet, such as:

- Existing blog posts and marketing content

- Academic papers and research articles

- News reporting and professional journalism

- Classic literature and historical documents

Because of this, AI detectors often attribute certain writing traits to AI, even when those traits reflect good writing practices. For example, these tools tend to flag content that includes:

- Clear sentence structure

- Strong grammar and punctuation

- Consistent tone and organization

- Concise, predictable phrasing

Ironically, these are the exact qualities most writers are taught to aim for. As a result, writing that does all the right things is being flagged as AI simply because it matches patterns the detector learned from large datasets of internet text.

In fact, one of the more recent, and arguably silliest, internet myths about quickly spotting AI writing is that it uses em dashes (—) or bullet points (as I just did). In reality, these are standard formatting tools used in journalism, copywriting, and academic writing to improve clarity and readability, and experienced writers were using them long before AI tools existed. So, as writers, should we deliberately avoid these proven readability tools just to avoid being accused of AI use?

The Irony of AI Detection

Ironically, our tests with these AI detectors show that one of the easiest ways to avoid AI detection flags is to deliberately use poor copywriting practices. We’re talking about awkward phrasing, inconsistent grammar, spelling mistakes, and overly long, uneven sentence structures. Or, as mentioned in the intro, writing content as if it were meant for a Facebook buy/sell group or a Reddit thread. These imperfections break the predictable patterns that AI detectors look for, because the tools can’t find familiar patterns in ‘bad’ writing.

But that’s not what you, as a client hiring a digital marketing agency to produce content, would want, is it? Businesses hire writers to produce clear, polished, professional content that converts, not sloppy articles designed only to trick a detection tool.

The Best AI Detector Is Still an Experienced Writer

What often gets overlooked is that the people most capable of recognizing AI patterns are still the experienced human writers and editors. People who spent years working with language before 2023 (when AI writing became common) can often notice patterns such as overly uniform sentence structure, predictable transitions, or vague authority.

That kind of recognition comes from years of copywriting experience gained through reading, editing, and producing large volumes of writing, whether technical or creative.

Our Verdict on AI Detectors

AI detection tools may seem like a quick and easy answer for identifying AI-generated writing, but the reality is far more complicated. As research, testing, and even statements from AI developers have shown, these tools are far from reliable. False positives, inconsistent results across different AI detectors, and the inability to distinguish between strong human writing and AI patterns all make it difficult to treat their findings as proof.

In the end, evaluating writing still requires something no detector can fully replicate: human judgment, experience, and real content literacy.

Detecting AI use in content isn’t as simple as a convenient copy-and-paste check. If we relied on these tools as “evidence,” we could end up punishing a capable writer who is delivering original, polished, professional work. That puts relationships at risk between clients and their marketing teams, professors and students, and anyone whose day-to-day work depends on writing and communication.

Instead, it requires a trained human eye: someone whose writing and editing experience began well before ChatGPT and the many detector models that followed, and who can evaluate tone, structure, and context. That is why thoughtful human review is still essential.